Valerio Biscione

Personal Website

Getting started with Conditional Random Fields

This is the first of a series of post that I am going to write about Conditional Random Fields. I am recently following the excellent Coursera Specialization on Probabilistic Graphical Models (the videos for each course are freely accessible), and I found the topic really interesting. However, I felt that the time dedicated to Conditional Random Fields (CRF from now on) was decisively short, considering that this (and the evolution of this) model is been using in several applications nowadays: apart from the classic Part-of-speech tagging, it is used in phone recognition and gesture recognition (note that in this work Hidden Conditional Random Fields are used, a model which we will talk about in a future post)

Of course, this is not the only introduction on the internet that you can find on CRF: Sutton and McCallum wrote a excellent article (here), and another introduction can be found in Edwin Chen blog (here). However, Sutton and McCallum article appeared to me to be too hard for a novice that just started in the field; Edwin Chen blog assumes some knowledge of graphical models, and does not provide any code. Conversely, this gentle introduction will not assume any knowledge on probabilistic graphical model (but it does assume some basic of Probabilistic Analysis), and will provide some code for solving a simple problem that we all face: how can we tell if our cat is happy? By using Conditional Random Fields, of course!

I will use Python as a language of choice, with the nice pgmpy library. Installing pgmpy was completely painless, (just follow the instructions!). This guide is not going to be a formal introduction (for that you have many other sources you can dig in), but an informal, aimed more to convey the insight behind the model than to be mathematically precise.

So, what are Conditional Random Fields?

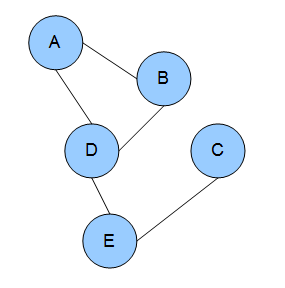

Let's make some classification. Conditional Random Fields is a type of Markov Network. Markov Networks are models in which the connection between events are defined by a graphical structure, as shown in the next Figure.

Each node represents a random variable, and the edges between nodes represent dependency. With Markov Networks is very convenient to describe these dependencies using factors ( ). Factors are positive values describing the strength of associations between variables. I know what you are thinking: are these probabilities? Or conditional probabilities? Nope, nope, they are not*, and in fact they are not bounded from 0 to 1, but can take any positive value (in some cases they could be equivalent to conditional probabilities, but this is in general not true).

). Factors are positive values describing the strength of associations between variables. I know what you are thinking: are these probabilities? Or conditional probabilities? Nope, nope, they are not*, and in fact they are not bounded from 0 to 1, but can take any positive value (in some cases they could be equivalent to conditional probabilities, but this is in general not true).

CRF are defined as discriminative model. These are models used when we have a set of unobserved random variables Y and a set of observation X, and we want to know the probability of Y given X. Quite often we want to ask a different question: what are the assignment to the Y variables that most likely generated the observations X? In particular, CRF are very useful when we want to do classification by taking into account neighboring samples. For example, let's say that we want to classify sections of a musical piece. You know that different sections are related, as there is a very strong chance that the exposition will come after the introduction. Well, CRF are perfect for doing that, as you can incorporate these knowledge in them. Not only! You can also incorporate arbitrary feature describing information that you know exists in the data. For example, you know that after a certain chord progression, you are quite likely going into the development section. Well, you can introduce that info as well in your model.

But for now, let's drop this music nonsense, and let's talk about something unquestionably more stringent:

Is your cat happy?

Everybody loves cats. They are cute, fluffy, and adorable. Not everybody knows how to tell if a cat is sad or happy. Well, you will soon be able to, with a little help from your friendly Conditional Random Fields!

Let's say that you have a very simple cat, Felix. He won't experience complex emotions such as anger, joy, or disgust. He will just be "happy" or "sad". You read on your favourite cats magazine that cats express contentedness with "purrs". However, some time, "purrs" may be expression of tensions. Felix, begin a simple cat, doesn't know what those words mean, and he uses "purrs" for expressing happiness or sadness.

We are going to observe Felix several times a day, and every time we are going to notice if he is purring or not. This will be our observation vector  . We know that our observation can tell us something about Felix's internal state at each time

. We know that our observation can tell us something about Felix's internal state at each time  (let's call this set

(let's call this set  ). So, if we observe that Felix is purring at this moment, we can more or less confidently say that Felix is happy. Pretty simple, right? Now is where it gets interesting.

). So, if we observe that Felix is purring at this moment, we can more or less confidently say that Felix is happy. Pretty simple, right? Now is where it gets interesting.

The important concept about Conditional Random Fields is that we can also specify dependencies between unobserved states. For example, we may know that our Felix is a pretty relaxed cat: his emotional states are quite stable, and we know that if at some point in time he was happy (or sad), he will most likely be happy (or sad) at the next point in time. On the other hand, we may know that our Felix is an histrionic diva, changing his mind at the blink of an eye. We can model this relationship as well.

With this information, we can build our graph:

As explained,  are the observation, and each

are the observation, and each  is connected with Felix internal state (

is connected with Felix internal state ( ) at time

) at time  . The internal states are also connected in pair. In this and the following example we are going to observe Felix 3 times (

. The internal states are also connected in pair. In this and the following example we are going to observe Felix 3 times ( ). Notice that each state is only affected by the previous one (our Felix doesn't seem to care about his internal state long time ago, but he is only affected by what he was feeling recently). This simple network is called a linear CRF.

). Notice that each state is only affected by the previous one (our Felix doesn't seem to care about his internal state long time ago, but he is only affected by what he was feeling recently). This simple network is called a linear CRF.

Now we also have to come up with some idea about the relationship between Felix's purring and his level of happiness. Let's say that we come up with this table:

The values are in an arbitrary scale, and they indicate the "strength" of the relationship. Note that these are not probabilities. To emphasise that, I didn't scale those from 0 to 1 (but your are free to do so). From the table we can see that Felix can express his emotion more strongly when he is purring. If he is purring, we can be say quite surely that he is happy. If he is not purring, we can say that he is sad, but the strength of this belief is not so strong: we are convinced '25' [whatever scale that is] that he is sad, and '15' that he is happy. We will call this factor

Now we have to specify the transition probability: if Felix is happy now, what's the chance that he is going to be happy later on? For the time being, we want to ignore this dependency and just get everything to work, so we will build this uninformative transitional matrix

(notice that the value can be anything, as far as it's the same for each entry in the table). The row variables  indicates the emotional state at time

indicates the emotional state at time  ; the

; the  the emotional state at time

the emotional state at time  . We will call this factor

. We will call this factor  . This is how the network looks like with the factors:

. This is how the network looks like with the factors:

Let's see how we can implement this in Python. We are going take 3 measures for now, so our network will only have 3 unobserved states and 3 observed states:

[to run this code you need Python2.7, pgmpy, and numpy]

from pgmpy.models import MarkovModel

from pgmpy.factors.discrete import DiscreteFactor

import numpy as np

from pgmpy.inference import BeliefPropagation

def toVal(string):

if string=='purr':

return 0;

else:

return 1;

catState=['happy','sad']

MM=MarkovModel();

# non existing nodes will be automatically created

MM.add_edges_from([('f1', 'f2'), ('f2', 'f3'),('o1','f1'),('o2','f2'),('o3','f3')])

#NO DEPENDENCY

transition=np.array([10, 10, 10, 10]);

#RELAXED CAT

#transition=np.array([90,10,10,90]);

#DIVA CAT

#transition=np.array([10, 90, 90, 10]);

purr_happy=80;

purr_sad=20

noPurr_happy=15;

noPurr_sad=25;

factorObs1= DiscreteFactor(['o1','f1'],cardinality=[2, 2], \

values=np.array([purr_happy, purr_sad, noPurr_happy,noPurr_sad]))

factorObs2= DiscreteFactor(['o2','f2'],cardinality=[2, 2], \

values=np.array([purr_happy, purr_sad, noPurr_happy,noPurr_sad]))

factorObs3= DiscreteFactor(['o3','f3'],cardinality=[2, 2], \

values=np.array([purr_happy, purr_sad, noPurr_happy, noPurr_sad]))

factorH1= DiscreteFactor(['f1','f2'],cardinality=[2, 2], values=transition)

factorH2= DiscreteFactor(['f2','f3'],cardinality=[2, 2], values=transition)

#factor.values

MM.add_factors(factorH1)

MM.add_factors(factorH2)

MM.add_factors(factorObs1)

MM.add_factors(factorObs2)

MM.add_factors(factorObs3)

Note the peculiar way of inserting the entry for each factor. We take the conditional probability table, starting from the first row, and we proceed from left to right before going to the next row. We can have a more friendly representation by calling factorObs1.values(). If you are wondering what does cardinality means, it's just the number of values that each variable in the factor can take. In our case, we are working with binary variables, so cardinality will always be 2.

Now we are going to do some magic. By using an inference algorithm called Belief Propagation we will be able to estimate the probability of each internal state. However, we are not exactly interested in that, but we want to know what is the most likely internal state given our purring observations. The procedure for this is called MAP, and there are different ways of implementing that. We are going to use the MAP included in the Belief Propagation algorithm. Notice how in the code we specify what are the values of our observed variables (evidence=...). The function toVal maps "purr" to the entry 0 and "no_purr" to the entry 1.

belief_propagation = BeliefPropagation(MM)

ymax=belief_propagation.map_query(variables=['f1','f2','f3'],\

evidence={'o1' : toVal('purr'), 'o2' : toVal('no_purr'), 'o3' : toVal('purr')})

#ymax=belief_propagation.map_query(variables=['f1','f2','f3'],\

#evidence={'o1' : toVal('purr'), 'o2' : toVal('no_purr'), 'o3' : toVal('no_purr')})

#ymax=belief_propagation.map_query(variables=['f1','f2','f3'], \

#evidence={'o1' : toVal('purr'), 'o2' : toVal('purr'), 'o3' : toVal('purr')})

print('f1: ' + str(ymax['f1']) + ' = ' + catState[ymax['f1']]);

print('f2: ' + str(ymax['f2']) + ' = ' + catState[ymax['f2']]);

print('f3: ' + str(ymax['f3']) + ' = ' + catState[ymax['f3']]);

In our case, we observed a sequence of purr, no_purr, and purr again. As we have not specified any dependencies between states, the network is going to tell us that our cat was happy, sad, and happy again. Is this the case, though? Let's take a more realistic approach to this fluffy problem.

Felix as a cool, relaxed cat

Let's consider the first case: Felix is a cool dude, and when he is in a state, he is probably going to stay there for quite long time. We are going to use this transition factor table:

Which clearly tell us that there is a strong connection between being happy (or sad) at time  , and being happy (or sad) at time

, and being happy (or sad) at time  . To generate this network, uncomment line 22 (from the first snippet), and run the script again. What is going to happen now? All the three states are now classified as "happy"! The lack of purr in the middle state, being less strongly connected with his level of happiness, has been overcome by the neighbour states, which more surely indicates that Felix was happy.

. To generate this network, uncomment line 22 (from the first snippet), and run the script again. What is going to happen now? All the three states are now classified as "happy"! The lack of purr in the middle state, being less strongly connected with his level of happiness, has been overcome by the neighbour states, which more surely indicates that Felix was happy.

Try instead to set the sequence  (uncomment line 4 and 5 from the second snippet). What's happening now? Think about what the network is computing, and check if it makes sense.

(uncomment line 4 and 5 from the second snippet). What's happening now? Think about what the network is computing, and check if it makes sense.

Felix as a diva

Let's consider the situation where Felix is a diva, and he is more likely to change his mood along different observations. We can represent this by using the following transition table:

Which favours transition from sad to happy or from happy to sad. Now let's say that we observe our Felix purring all the time. Being him a crazy diva that he is, can we infer that he is indeed happy all the time? No way! In fact, if you uncomment line 25 from the first snippet and line 6 and 7 from the second snippet, and you will see that even though the observed sequence is  , the most likely state appears to be

, the most likely state appears to be  . Oh Felix, you are driving me crazy!

. Oh Felix, you are driving me crazy!

This simple example shows only the basics of the potential of CRF. This is only the beginning. We may want to automatically calculate the transition table, given the observation. Or we may want to include more complicated observation, or generate a non-linear graph. We can do all of this and much more with CRF.

In the next post I hope to use more complicated models to show other cool features of this approach. Hope you enjoyed it!